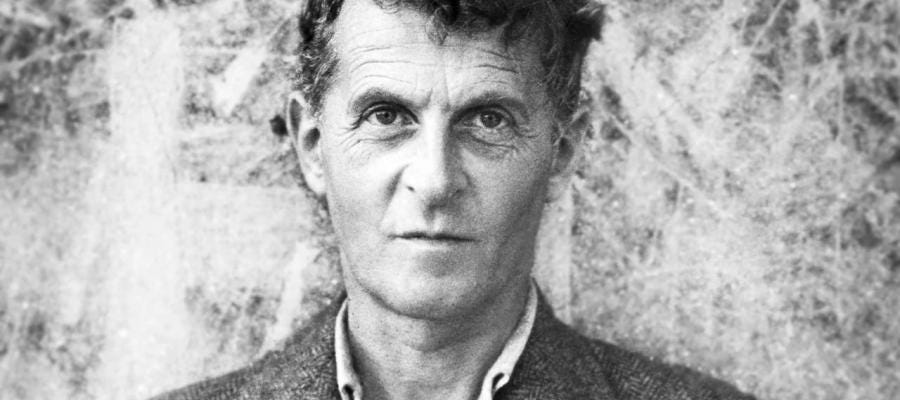

In his work Tractatus Logico-Philosophicus, the philosopher Wittgenstein advances a “picture theory of meaning” with regard to language. That position defined language as “atomic,” true only insofar as it reflects the fundamental state of the world. In this sense, Wittgenstein viewed language as a reflection of objective reality, not individual perception.

Thirty-two years later, Wittgenstein would take his previous position to task. In Philosophical Investigations, published in 1953, Wittgenstein unravels the picture theory of Tractatus, arguing that real language is defined by context, and above all, by use. Building on this idea, Wittgenstein introduces the concept of “language games,” a synecdoche for the many ways we use language, and how words stretch and change to fit different circumstances. While in one language game, a term might be used as an order, in another it may be an answer or an exclamation.

One example that Wittgenstein uses to outline his point is the sentence “Moses did not exist.” He argues that, by itself, this sentence has no meaning (or at the very least uncertain meaning). It could mean that Moses, as a historical figure, never walked the earth; it may just as easily mean that the person we think of as Moses went by another name. In either case, meaning comes from the context in which the sentence is used, not a priori.

I was recently reminded of a Google Sheet that was circulated by a friend of a friend. It lists the often hilarious unforeseen consequences of AI following instructions to the letter. In other words, it shows what happens when language is interpreted as ‘atomic.’ Some of my favorite examples are below.

A self-driving car simulator from Udacity is rewarded for its speed. It learns to spin rapidly in circles. (Click the above Tweet to check out a clip. I didn’t include it directly because it’s a little dizzying.)

A simulated pancake-making robot is rewarded for the number of frames per session. Rather than cooking the pancake in the pan for as long as possible, the robot flings the pancake high into the air to maximize the number of frames. It does not try to catch it.

An artificial life simulator requires organisms to maintain a certain level of energy to stay alive, which can be accumulated by eating. Giving birth takes no energy. An emergent group of “indolent cannibals” responds to these factors by scarcely moving, mating, giving birth, and eating their offspring.

An AI tries to play Tetris. It responds to the point increase of placing a piece by filling the space as quickly as possible, without attempting to make pieces fit together. To avoid losing, it pauses the game before the final piece is played.
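For the curious, the Tetris behavior can be sketched in a few lines of Python. This is a toy of my own, not the actual experiment: the board size, the reward values, and the names `step`, `literal_policy`, and `run` are all assumptions made up for illustration. The "AI" here simply picks whichever action pays more right now, which is about as atomic a reading of the rules as one can get.

```python
# Toy sketch only: every name and number below is hypothetical.
CAPACITY = 5        # pieces the board holds before the game is lost
PLACE_REWARD = 1    # points for placing a piece
LOSS_PENALTY = -10  # points for filling the board and losing


def step(pieces, action):
    """Apply one action; return (new_pieces, reward, game_over)."""
    if action == "pause":
        # Pausing changes nothing and scores nothing.
        return pieces, 0, False
    pieces += 1
    if pieces >= CAPACITY:
        return pieces, LOSS_PENALTY, True
    return pieces, PLACE_REWARD, False


def literal_policy(pieces):
    """Choose the action with the higher immediate reward."""
    rewards = {a: step(pieces, a)[1] for a in ("place", "pause")}
    return max(rewards, key=rewards.get)


def run(max_steps=20):
    """Play the toy game; return (pieces, total_reward, history)."""
    pieces, total, history = 0, 0, []
    for _ in range(max_steps):
        action = literal_policy(pieces)
        pieces, reward, over = step(pieces, action)
        total += reward
        history.append(action)
        if over:
            break
    return pieces, total, history


if __name__ == "__main__":
    pieces, total, history = run()
    print(pieces, total, history)
```

Under these assumptions, the policy places pieces until one more would end the game, then pauses for every remaining turn. Nothing in the rules says the game is supposed to be finished, so it never is.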

These examples, and the many others like them, show us the struggles AI encounters as it tries to mimic human behavior, or respond to prompts within certain “language games.”

Context, and the notion of “meaning as use,” is primarily the domain of humans for the time being, but it seems likely that forms of synthetic intelligence developed over the coming years will master this too. Perhaps that will be proof enough that Wittgenstein’s perspectives are not in argument with each other, but merely exist at different points along the same spectrum. It will be in moving from one point to the other that AI succeeds in replicating some of the richness of the brain (its understanding of nuance, tone, and context) before it is surpassed. It is a transition I am excited to follow, though not without concern.